Everyday Applications of Artificial Intelligence

Anyone who’s read our three-part series of introductory articles can already imagine how Artificial Intelligence solutions are built in everyday life, in the world of business and in fields of industry. The use of AI mainly focuses on how to generate real value from data and how to get from gathering information to making real decisions.

Every phase of this process can be uniquely supported by technologies collectively called Artificial Intelligence. The term “artificial intelligence” can be quite misleading, since narratives well known from sci-fi stories suggest it means “intelligence” created “artificially”, by design: the ability to think and, ultimately, a machine that copies the functioning of the human mind. This is a purely theoretical concept, something of a holy grail of modern science, much like quantum computers or cold fusion. Such a discovery would raise human existence to a whole new dimension.

But AI already has applications that are more or less everyday uses. How is that possible? Because experts define the notion of AI completely differently than sci-fi writers. It is by no means about imitating human thinking. Its importance lies in the ability of software to solve problems in a way that's reminiscent of the human decision-making process:

That is, if A happens, I follow up with B, but if C happens, I proceed with D.

This is possible because computers have become much faster, and dedicated hardware developed and optimized for Machine Learning has become more widespread.

It's also important to note that, in recent years, researchers have made more and more empirical observations on the effectiveness of certain algorithms. Exactly why these algorithms work is, in many cases, still an active area of research.

AI is called Artificial “Intelligence” because machines equipped with it can perform tasks reminiscent of processes that, until now, only the human mind could carry out: perception, learning, and responding to the environment.

This laid the groundwork for reinforcement learning, for example. The software can experiment with solutions and observe which decisions (or rather choices) lead to what results. It can then continuously optimize its own workflow to reach the goal in fewer and fewer steps (more efficiently) or to get better and better results.
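To make the idea of trial and error more concrete, here is a minimal sketch of tabular Q-learning, one common reinforcement learning technique. Everything in it is an assumption made for illustration: the toy "corridor" world with six positions, the reward of 1 for reaching the last position, and the parameter values `ALPHA`, `GAMMA` and `EPSILON`. It is not taken from any particular product or library.

```python
import random

N_STATES = 6          # positions 0..5; position 5 is the goal (hypothetical toy world)
ACTIONS = (-1, +1)    # action 0 = step left, action 1 = step right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.2   # learning rate, discount, exploration rate


def run_episode(q, rng, explore=True, max_steps=200):
    """Walk from position 0 towards the goal, updating q in place.

    Returns the number of steps taken in this episode.
    """
    state, steps = 0, 0
    while state != N_STATES - 1 and steps < max_steps:
        if explore and rng.random() < EPSILON:
            # Experiment: occasionally try a random action
            action = rng.randrange(len(ACTIONS))
        else:
            # Otherwise pick the action currently believed to be best
            best = max(q[state])
            action = rng.choice([a for a, v in enumerate(q[state]) if v == best])
        # Move, but never leave the corridor
        next_state = min(max(state + ACTIONS[action], 0), N_STATES - 1)
        reward = 1.0 if next_state == N_STATES - 1 else 0.0
        # Q-learning update: nudge the estimate towards what was just observed
        target = reward + GAMMA * max(q[next_state])
        q[state][action] += ALPHA * (target - q[state][action])
        state, steps = next_state, steps + 1
    return steps


def train(episodes=200, seed=0):
    """Run many episodes and return the learned table plus steps per episode."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    history = [run_episode(q, rng) for _ in range(episodes)]
    return q, history
```

Calling `q, history = train()` and then `run_episode(q, random.Random(1), explore=False)` lets the trained agent walk without experimenting. In this toy world the shortest possible route is five steps, which is what the agent settles on once it has learned that stepping right pays off.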

Smart Televisions, Intelligent Laundry Detergents

So sci-fi fantasies should be immediately excluded from the list when it comes to the real possibilities of AI, at least for now. The term “intelligent” has become as commonplace as “smart”. What does it really mean when a TV, a phone, or any home appliance is labelled “smart”?

It merely refers to their ability to record, pass on, receive and interpret data, i.e. to communicate, distinguishing them from their older counterparts that didn't have an internet connection yet.

How can a laundry detergent be marketed as “intelligent”? Because thanks to its chemical composition, it aggressively gets rid of dirt while being gentle to fabric – that’s the promise at least. Therefore, the detergent can “make a decision”: Here's a grease stain, I'll dissolve it; but this is cotton, so I'll leave it alone.

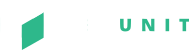

Fortunately, Artificial Intelligence is already much more sophisticated than that. The so-called “neural networks” are capable of high complexity. Sure, they’re not as complex as the human nervous system they’re named after, but at least they resemble it in their principles and structure.

The precondition and fuel of Artificial Intelligence is digital data. There are many ways to generate primary data, the most typical being a sensor, such as a digital thermometer: it measures temperature and records a position on the scale in the form of a digital signal. But what can AI do with this data? Here are some examples of its common uses today:

· Chatbots

· Image Recognition

· Intelligent Robots

Chatbots

Today, most internet users have already come across a chatbot, an automatic messaging program. While you browse a company’s website, a chat window opens in which you are addressed by “someone”. Since the goals of most visitors are fairly predictable (e.g., making inquiries, shopping or arranging administrative affairs), a company that knows typical visitor behavior patterns can offer these services in the form of a message and, after a few rounds of questions and answers, will likely provide useful help. And, of course, if the complexity of the conversation exceeds the capabilities of the chatbot, a customer service employee can take over.

For example, in the United States, where regulations are typically less strict, even serious banking services can now be used via chatbots, such as initiating a transfer, viewing account history or setting up an automatic transfer. Within a few years, chatbots could become an everyday feature at plenty of businesses where customer needs can be determined efficiently, such as food delivery orders, routine vehicle servicing or even booking a haircut appointment. They would replace repetitive, time-consuming workflows, giving people more time for valuable business development tasks.
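The basic logic described above (guess what the visitor wants, answer if possible, hand over to a human otherwise) can be sketched in a few lines. This is a deliberately simple keyword-matching illustration, not how production chatbots are necessarily built; the intent names, keywords and reply texts are all hypothetical.

```python
import re

# Hypothetical intents and trigger keywords, chosen only for illustration
INTENT_KEYWORDS = {
    "transfer": {"transfer", "send", "pay"},
    "account_history": {"history", "statement", "transactions"},
    "appointment": {"appointment", "book", "booking"},
}

# Hypothetical canned replies for each intent
REPLIES = {
    "transfer": "Sure, who would you like to send money to?",
    "account_history": "Here are your most recent transactions.",
    "appointment": "Which day would suit you best?",
}

HANDOFF = "Let me connect you with a customer service colleague."


def detect_intent(message):
    """Return the intent whose keywords overlap most with the message, or None."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    scores = {intent: len(words & kws) for intent, kws in INTENT_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else None


def reply(message):
    """Answer with a canned reply, or hand over to a human if nothing matches."""
    return REPLIES.get(detect_intent(message), HANDOFF)
```

For instance, `reply("I'd like to book an appointment for a haircut")` returns the appointment question, while a message that matches none of the keywords falls through to the `HANDOFF` text, mirroring the moment when a customer service employee takes over.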

Image Recognition

Google's image recognition function is familiar to anyone who's ever looked up an image online. You can ask the search engine to look for similar images and get amazing results. Not only can you find the exact same image in several versions, but the system might also bring up images with similar colors and shapes.

Google Image Recognition, however, is just the tip of the iceberg. Besides digital still images, we can use live camera footage, recordings, scanned documents and anything else that consists of pixels. Self-driving cars continuously analyze the footage from their on-board cameras to function properly. In industry, quality assurance tasks can be done by computer-operated cameras instead of humans, because if we teach machines what a perfect product looks like, they will recognize errors. In the third part of our series about the phases of AI application, we elaborated on the already existing uses of industrial image recognition.
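The quality assurance idea can be illustrated with a very small sketch. Note that this is plain pixel comparison against a reference image, a far simpler technique than the trained models used in real industrial systems; the tiny 4x4 grayscale "images", the function names and the threshold of 10 are assumptions chosen purely for illustration.

```python
def mean_abs_diff(image, reference):
    """Average per-pixel brightness difference between two grayscale images."""
    if len(image) != len(reference) or any(
        len(row) != len(ref_row) for row, ref_row in zip(image, reference)
    ):
        raise ValueError("images must have the same dimensions")
    total = sum(
        abs(p - r)
        for row, ref_row in zip(image, reference)
        for p, r in zip(row, ref_row)
    )
    return total / sum(len(row) for row in reference)


def is_defective(image, reference, threshold=10.0):
    """Flag the product when it differs too much from the reference."""
    return mean_abs_diff(image, reference) > threshold


# Hypothetical 4x4 grayscale samples (0 = black, 255 = white)
REFERENCE = [[200] * 4 for _ in range(4)]
GOOD = [
    [200, 198, 201, 200],
    [199, 200, 200, 202],
    [200, 200, 200, 200],
    [201, 200, 199, 200],
]
SCRATCHED = [
    [200, 200, 200, 200],
    [200, 40, 40, 200],
    [200, 40, 40, 200],
    [200, 200, 200, 200],
]
```

Here `GOOD` differs from the reference only by slight sensor noise (an average difference of 0.5), so it passes, while the dark patch in `SCRATCHED` pushes the average difference to 40 and the item is flagged.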

A typical field of application for image analysis is healthcare diagnostics: the analysis of X-rays, ultrasound images, or even the smallest details of heart sound patterns. Of course, image analysis cannot replace the expertise of medical professionals, but it can be a useful tool for them, e.g., to pre-screen patients' records, identify straightforward cases or sort documents by certain parameters so that doctors only have to deal with essential matters. These are already successful supplementary solutions.

Intelligent Robots

If Artificial Intelligence can already be used for messaging, might simple bureaucratic tasks be worth a try as well? Indeed, an investment management company called BNY Mellon entrusted digital robots, i.e., software assistants, with securities settlement and data request tasks. The experiment was a great success, with almost 100% accuracy and an extraordinary increase in efficiency:

- the securities settlement workflow has been reduced from 10 minutes to a quarter of a second

- the average duration of data requests has been reduced from 6-10 days to 24 hours
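To put these two figures on a common scale, they can be converted into speed-up factors. The short calculation below uses only the numbers quoted above; the helper name `speedup` is just an illustrative choice.

```python
MINUTE, HOUR, DAY = 60, 3600, 86400   # durations expressed in seconds


def speedup(before_seconds, after_seconds):
    """How many times faster the new workflow is than the old one."""
    return before_seconds / after_seconds


# Securities settlement: 10 minutes down to a quarter of a second
settlement = speedup(10 * MINUTE, 0.25)        # 2400.0

# Data requests: 6-10 days down to 24 hours
data_low = speedup(6 * DAY, 24 * HOUR)         # 6.0
data_high = speedup(10 * DAY, 24 * HOUR)       # 10.0
```

In other words, settlement became roughly 2,400 times faster, while data requests became about 6 to 10 times faster.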

These tools and solutions can produce tremendous value and, unlike machines with human thoughts, they are no longer just science fiction. Artificial Intelligence is not a digital butler or secretary yet, but it can already provide effective assistance in many subtasks. In business development, the optimization of industrial production lines, our private lives and almost any other field, there are truly useful tools and services that didn’t even exist 3 or 4 years ago. Want to know how artificial intelligence can help your company or business? We offer turnkey solutions as well as customized programs. Contact us with your questions here!
